Walleye

Simon Penny

(technical assistance Tom Jennings)

2006-2009 – unfinished

Walleye is an interactive light installation which engages users in temporal gestural and bodily play. It generates temporal light propagation patterns, or a large-scale, very low-resolution pixelated image (depending on how you look at it), based on the real-time movement of visitors in the space. The project is informed by discourses around emergent complex behavior, active sensing, homeostasis, autopoiesis, structural coupling and enactive cognition.

Some key ideas about Walleye

Emergent Complexity and the semblance of Intelligence

WallEye is an experiment in how much complex behavior or 'appearance of intelligence' one can elicit from an entirely horizontal, non-hierarchical system: not even horizontal or distributed or rhizomatic, but entirely unconnected except through marginal environmental interactions, i.e. 'leakage' across emitter-sensor channels. In this sense it is a continuation of concerns around emergent complexity which I have explored in previous works such as Sympathetic Sentience (1995-6), http://ace.uci.edu/penny/works/sympathetic.html.

The semblance of Intelligence

The notion of a 'semblance of Intelligence' points back to the ontological inquiries of Second Order Cybernetics (von Foerster, von Glasersfeld et al.) and early Autopoietic theory, cf. Humberto Maturana's aphorism 'everything said is said by an observer'. Not that these ideas originated there... "If there is no other, there will be no I. If there is no I, there will be none to make distinctions." Chuang-tsu, 4th Cent., B.C.

Electronic Arte Povera

Contrary to the fetishism of resolution/bandwidth/computation, Walleye is an experiment in the ability of people to discern meaningful temporal/spatial pattern from an ultra-low resolution display. This concern with thresholds of pattern recognition in human perception, specifically enactive perception, has been a theme I have pursued since Ceci N'est pas un Oiseau, 1989, http://ace.uci.edu/penny/works/ceci.html

Technological Parsimony

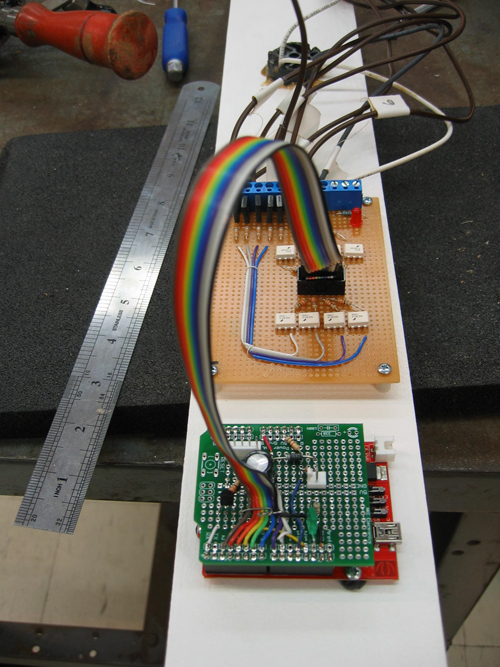

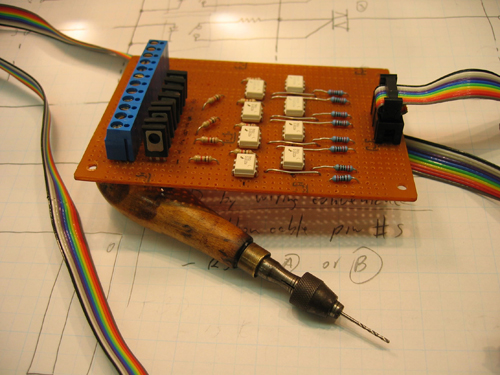

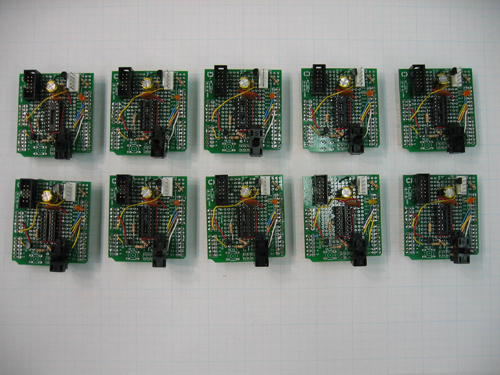

As a critical intervention into the accepted rhetoric of the 'general purpose machine', all the hardware (electronic and physical) is custom designed and built (with the exception of the 20 Seeeduino microcontrollers). It would be possible to implement WallEye as a pro-cam system (video camera, computer, projector), but this would be contrary to the spirit of the project, both in the sense of technological excess and in terms of an implicit centralising hierarchical structure antithetical to the project.

Enactive Cognition and the Aesthetics of Behavior

The mode of engagement of Walleye (and interactive installations in general) involves, to a greater or lesser extent, embodied spatio-temporal exploration in an ongoing engagement with a semi-autonomous agent or agent-like system. This kind of cultural artifact, which possesses the ability to respond to its environment, is historically new, and therefore so is this mode of engagement. It is arguably without precedent in the history of the (plastic) arts and thus demands an entirely new branch of aesthetics.

Walleye – Physical layout, Behavior and Technical R+D

Walleye, as an experiment in rhizomatic structuring of electro-physical process, required extensive design and development of custom software, circuitry and physical structure. The basic behavior of the system is that each sensor looks at its corresponding lamp. The brightness of the lamp is controlled by the brightness of the signal on the sensor – in a circular feedback loop.

On one wall is an array of photosensors spaced on a 10” grid. On the opposite wall is an array of incandescent bulbs at the same grid spacing. Each photosensor drives the one lamp directly opposite it. It communicates only with that lamp and no other. Lowering the light reaching the sensor lowers the light emitted by the corresponding lamp.
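The sensor-lamp loop described above can be illustrated with a small simulation. This is only a sketch under stated assumptions, not the installation's firmware: the function names, the `gain` and `ambient` values, and the idea of modelling a visitor as a fractional `occlusion` of each channel are all hypothetical.

```python
def step(lamps, occlusion, gain=0.9, ambient=0.1):
    """Advance every independent sensor-lamp pair by one update.

    lamps: current lamp brightness per channel, each in [0, 1]
    occlusion: fraction of each lamp's light blocked by a visitor, in [0, 1]
    gain, ambient: assumed illustrative constants, not measured values
    """
    new_lamps = []
    for brightness, blocked in zip(lamps, occlusion):
        # The sensor sees only its own lamp (no crosstalk here) plus ambient light.
        sensed = ambient + brightness * (1.0 - blocked)
        # The lamp is driven directly by its sensor, clamped to the valid range.
        new_lamps.append(max(0.0, min(1.0, gain * sensed)))
    return new_lamps


def settle(occlusion, steps=200):
    """Run the loop from fully lit lamps until it has effectively settled."""
    lamps = [1.0] * len(occlusion)
    for _ in range(steps):
        lamps = step(lamps, occlusion)
    return lamps


if __name__ == "__main__":
    # A visitor fully blocks the middle channel of three.
    print(settle([0.0, 1.0, 0.0]))
```

With these assumed constants an unobstructed channel settles at a bright level, while a fully blocked channel falls to a dim level sustained only by ambient light. No channel reads any other channel's state.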

There is no global coordination of data. There is no 'image processing'. Yet the visitor sees a low resolution image of themselves, more temporally coherent than spatially resolved. If they are moving close to the sensor wall, their image is sharper; if they are moving closer to the lamp wall, their image is more diffuse.

The movement of visitors in the space in a sense perturbs an otherwise stable and quiescent system, sending disruptions rippling through the system, in ways that are analogous to neural propagation.

There will be some crosstalk between lamps and sensors. Each sensor will sense some light from lamps neighbouring the lamp it drives. So some global emergent patterning will arise. Deft tweaking of hardware and software variables will result in patterning which is engaging but not confusing to visitors. Some temporal treatment of data flow from sensor to lamp will result in delays, fades, delta reversals or similar behavior.
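A rough way to picture this crosstalk is to let each sensor in a single column pick up a little light from the lamps directly above and below its own, and to apply a simple fade as one possible temporal treatment. The sketch below is hypothetical throughout: the `crosstalk`, `fade`, `gain` and `ambient` values are invented for illustration, and the real coupling would depend on the physical optics.

```python
def step_with_crosstalk(lamps, occlusion, gain=0.7, ambient=0.1,
                        crosstalk=0.1, fade=0.5):
    """One update of a single column where sensors also see neighbouring lamps."""
    n = len(lamps)
    new_lamps = []
    for i in range(n):
        # Light reaching sensor i from its own lamp, reduced by any visitor.
        own = lamps[i] * (1.0 - occlusion[i])
        # Leakage from the lamps immediately above and below in the column.
        leak = sum(lamps[j] for j in (i - 1, i + 1) if 0 <= j < n)
        sensed = ambient + own + crosstalk * leak
        target = max(0.0, min(1.0, gain * sensed))
        # Temporal treatment: move only part of the way to the target (a fade).
        new_lamps.append(lamps[i] + fade * (target - lamps[i]))
    return new_lamps


def settle_column(occlusion, steps=400):
    """Iterate from a mid-brightness start until the column has settled."""
    lamps = [0.5] * len(occlusion)
    for _ in range(steps):
        lamps = step_with_crosstalk(lamps, occlusion)
    return lamps


if __name__ == "__main__":
    baseline = settle_column([0.0] * 7)
    disturbed = settle_column([0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0])
    # Drop in brightness per lamp caused by blocking only the middle channel.
    print([round(b - d, 4) for b, d in zip(baseline, disturbed)])
```

In this toy model, blocking one channel also dims its neighbours, with the effect shrinking with distance. That is one simple form of the ripple-like propagation described above, arising without any channel being wired to another.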

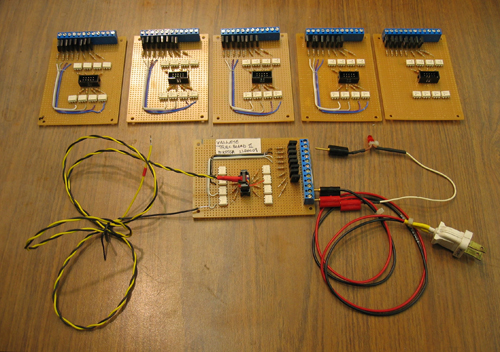

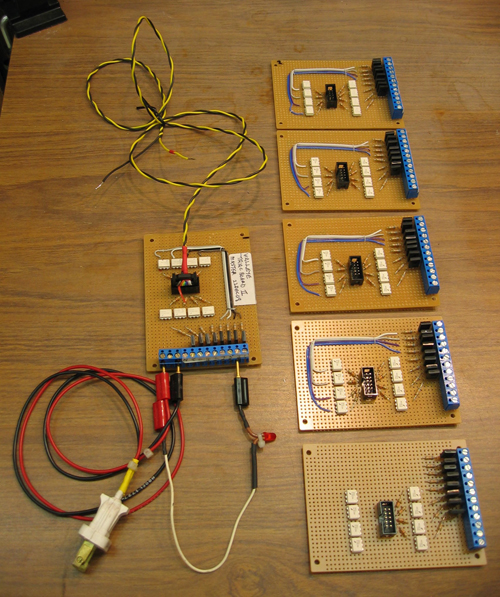

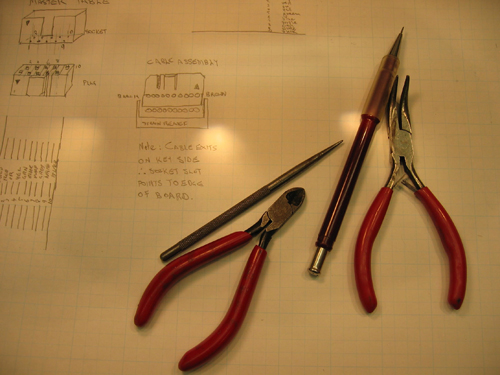

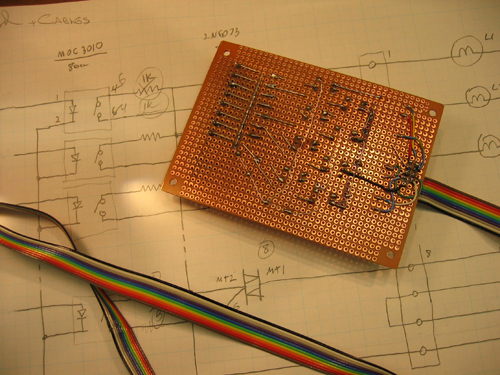

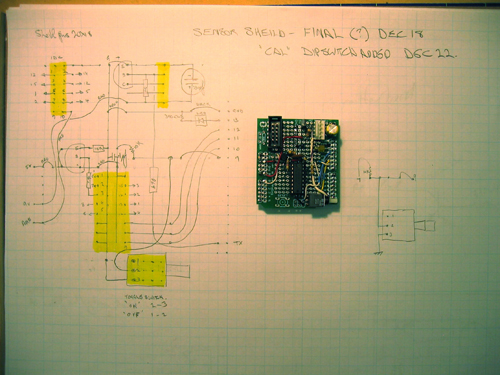

Custom technical R+D

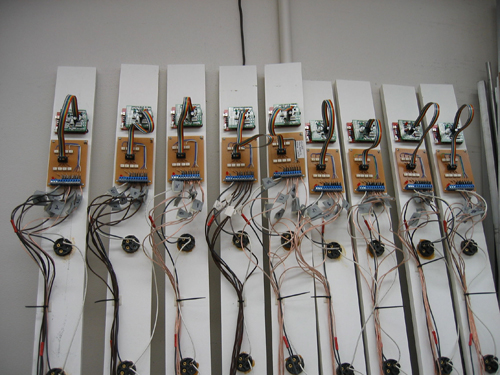

Sensors: discrete phototransistors were set in 1” square aluminium tube, with through-holes functioning as focus tubes. Twelve of these tubes were hung vertically and carefully aligned to form a 10” grid 12 feet in length and 8 ft high.

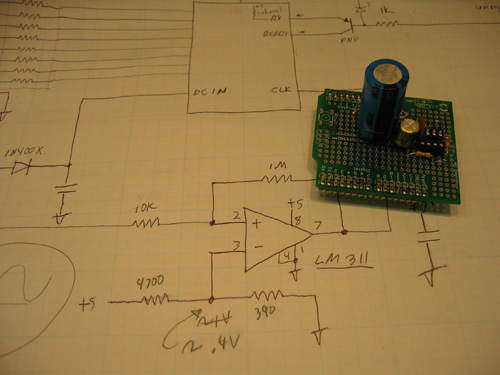

Lamps are arranged in similar vertical columns aligned squarely on the opposite wall, 8-12 ft away. Each column of 10 sensors is interfaced with a microcontroller, which sends preprocessed sensor values to the microcontroller for the corresponding lamp column. Lamp brightness is achieved via a custom system using 60 Hz clocked PWM to drive a logic-controlled triac. 24 Arduino microcontrollers were networked in the project, with custom circuitry and software developed for sensor data collection, digital 110 V AC brightness control and AC waveform-based clocking. The latter was necessary to synchronise the triac control signals with the AC waveform. Custom hardware was developed for sending the clock pulse on the DC power lines to the microcontrollers and extracting the clock pulse from the power line.
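To give a sense of why the triac signals must be synchronised with the AC waveform, here is a sketch of the timing arithmetic for phase-angle dimming, one common way of driving a triac. Whether Walleye's circuitry used exactly this scheme is an assumption; the function names and the linear mapping from brightness level to delay are hypothetical.

```python
import math


def half_cycle_us(mains_hz=60.0):
    """Duration of one AC half-cycle in microseconds (two per mains cycle)."""
    return 1_000_000.0 / (2.0 * mains_hz)


def firing_delay_us(level, mains_hz=60.0):
    """Delay after a zero crossing before triggering the triac.

    level: requested drive from 0.0 (never fire) to 1.0 (fire immediately).
    The linear level-to-delay mapping is an assumption made for simplicity.
    """
    level = max(0.0, min(1.0, level))
    return (1.0 - level) * half_cycle_us(mains_hz)


def power_fraction(delay_us, mains_hz=60.0):
    """Fraction of full power reaching an ideal resistive load for a given delay."""
    alpha = math.pi * delay_us / half_cycle_us(mains_hz)  # firing angle, radians
    return 1.0 - alpha / math.pi + math.sin(2.0 * alpha) / (2.0 * math.pi)


if __name__ == "__main__":
    for level in (0.25, 0.5, 0.75, 1.0):
        delay = firing_delay_us(level)
        print(level, round(delay, 1), round(power_fraction(delay), 3))
```

At 60 Hz each half-cycle lasts about 8.3 ms, so every delay has to be measured from a zero crossing. That is why a clock derived from the AC waveform has to reach every lamp controller.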

The demise of Walleye

After 2-3 years of prototyping parts of the system, a full-scale setup was developed. It was only at this stage that it became clear that calibration of the system would require two-axis, micrometer-precision alignment of the sensors. The time and expense of (re)building the array of 120 sensors, each with such precision, were beyond the budget and time available. Exhibition of the project in March 2009 was, regrettably, cancelled.