Telepanoscope/Vivatars

aka

VAPID

(Vision Augmented Panoscope with Interactional Dynamics)

Work in progress 2003-

The VAPID system is unique in that it will be a simple, comparatively low-cost, lightweight, portable, multi-purpose immersive environment with fully tested, unencumbering vision-based sensing, for use in embodied interactive video, embodied teleconferencing/telematics and embodied multi-user virtual worlds.

VAPID combines and builds upon two existing technologies, the Courchesne (et al.) Panoscope and the Penny/Bernhardt TVS multicamera 3D machine vision system, to create an interactive immersive environment that will function as the basis for a variety of applications. It is envisaged that multiple telepanoscopes will interface with shared virtual environments.

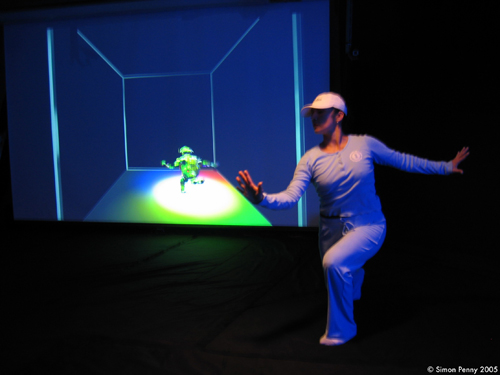

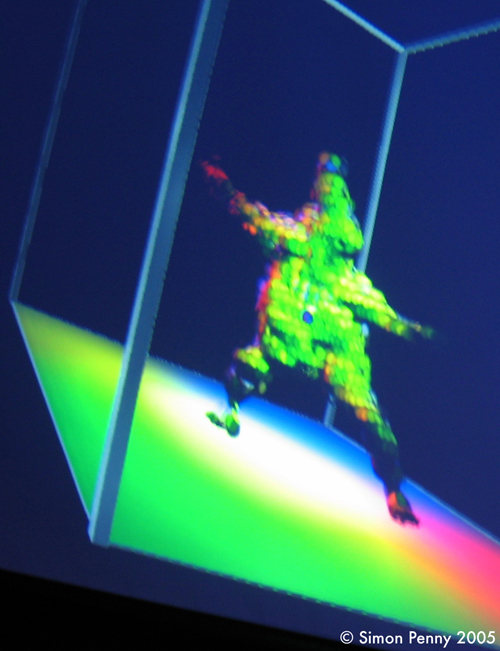

The system creates an immersive 3D world for a single user, allows unencumbered gesture-based navigation and control, and generates a physically accurate real-time 3D avatar to represent the user in virtual worlds. This in turn allows a range of embodied interaction in networked 3D worlds.

Panoscope is the general name of a panoramic video system developed by Luc Courchesne, UQAM, Montreal. The particular Panoscope design I intend to use is an 8'x16' inflatable fabric hemisphere that functions as a walk-in immersive environment (see image). Courchesne uses a 180x360 fisheye lens mounted on a centrally located projector pointing down to display video captured using the same lens. The user enters the Panoscope by lifting the 'skirt' and stepping in through its 'hem', a 4' circle on the floor.
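As a rough illustration of how a 180x360 fisheye image relates to viewing directions, the sketch below maps a direction (azimuth plus angle from the lens's optical axis) to a pixel in a circular fisheye frame. It assumes an ideal equidistant projection, which is only an approximation of any real lens; the function name `fisheye_pixel` and the `image_size` default are hypothetical and not taken from the Panoscope itself.

```python
import math


def fisheye_pixel(azimuth_deg, off_axis_deg, image_size=1024, fov_deg=180.0):
    """Map a viewing direction to (x, y) pixel coordinates in a circular fisheye image.

    Assumption: ideal equidistant projection, i.e. the distance from the image
    centre grows linearly with the angle from the optical axis. Real lenses
    deviate from this and would need calibration.
    """
    half_fov = fov_deg / 2.0
    if not 0.0 <= off_axis_deg <= half_fov:
        raise ValueError("direction lies outside the lens field of view")

    # Radius in pixels: 0 on the optical axis, image_size / 2 at the edge of the view.
    radius = (off_axis_deg / half_fov) * (image_size / 2.0)
    azimuth = math.radians(azimuth_deg)
    centre = image_size / 2.0
    return (centre + radius * math.cos(azimuth),
            centre + radius * math.sin(azimuth))
```

Under this model a direction along the optical axis lands at the image centre, and a direction 90 degrees off-axis lands on the rim of the image circle, so the full 360 degrees of azimuth wraps once around the frame.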

The Panoscope will be combined with the Penny/Bernhardt TVS multicamera 3D machine vision system. The Traces Vision System (TVS) builds a real-time 3D model of the user from four camera images. This model can be used to generate anatomically accurate real-time avatars, and for gesture-based navigation. The projected image will also be visible from outside the Panoscope.
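The text does not say how the Traces Vision System derives its 3D model, so the following is only a generic sketch of one common approach to the same problem: silhouette-based voxel carving (a "visual hull"). It assumes four idealised orthographic views arranged around the user and silhouettes given as sets of pixels; the view layout and the names `make_views`, `silhouettes_of` and `carve` are hypothetical.

```python
from itertools import product


def make_views(n):
    """Hypothetical layout: four orthographic cameras around an n x n x n voxel grid.

    Each view maps a voxel (x, y, z) to the (column, row) pixel it projects onto.
    """
    return {
        "front": lambda x, y, z: (x, z),
        "back": lambda x, y, z: (n - 1 - x, z),
        "left": lambda x, y, z: (y, z),
        "right": lambda x, y, z: (n - 1 - y, z),
    }


def silhouettes_of(solid, n):
    """Render the silhouette of a voxel set in every view.

    This stands in for segmenting the user from each camera image.
    """
    views = make_views(n)
    return {name: {project(*voxel) for voxel in solid}
            for name, project in views.items()}


def carve(silhouettes, n):
    """Keep only the voxels whose projection falls inside every silhouette."""
    views = make_views(n)
    return {
        voxel for voxel in product(range(n), repeat=3)
        if all(views[name](*voxel) in silhouettes[name] for name in views)
    }
```

A usage example: carving from the silhouettes of a simple box recovers the box, while a concave shape comes back slightly inflated, because silhouettes alone cannot reveal concavities.

```python
n = 5
box = {(x, y, z) for x in (1, 2) for y in (1, 2, 3) for z in range(4)}
hull = carve(silhouettes_of(box, n), n)
print(hull == box)
```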

Research parts

The following related projects (among others) will utilise the system and contribute to its development:

- Gesture-driven interactive immersive video (VIVI: Vision-driven Immersive Video Interactive ?). The Traces system is installed in a Panoscope, allowing gesture (pointing) to drive video selection. Further gestural control is also possible. Key research area: development of specialised code for body segmentation/anatomical analysis of the Traces system body models, and other interface development.

- Telepanoscope. Two to four Panoscopes with Traces vision allow embodied teleconferencing between 2-4 remote parties. The images from the vision system cameras are tiled onto four quadrants of the remote Panoscopes. Key research area: multimodal low-latency networking.

- Embodied Multi-User Virtual Environment: EMUVE. Real-time body models derived from the vision system in each Panoscope are used as fully articulated avatars in shared virtual environments. The vision system would generate a real-time 3D avatar, which would be placed in the virtual world for the other participants to see and interact with. The user would navigate and interact via the vision system, with limited gesture recognition based on body segmentation code we are currently working on. An instrumented circular platform will function as a 2DoF virtual skateboard. Key research areas: gesture languages, real-time rendering, virtual environment design.

- Luc Courchesne, UQAM, Montreal

- Andre Bernhardt, ZKM, Karlsruhe, Germany
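For the pointing-driven video selection described in the gesture-driven video project above, one simple way to turn a body model into a selection is sketched here. It assumes the vision system can supply 3D positions for a shoulder and a hand (not confirmed by the text), takes the horizontal direction from shoulder to hand as the pointing azimuth, and divides the surrounding image into equal sectors. The function names and the four-sector default are illustrative assumptions only.

```python
import math


def pointing_azimuth(shoulder, hand):
    """Horizontal pointing direction in degrees, in [0, 360), or None if undefined.

    shoulder and hand are (x, y, z) points with z as height (an assumption);
    only the horizontal components are used.
    """
    dx = hand[0] - shoulder[0]
    dy = hand[1] - shoulder[1]
    if dx == 0 and dy == 0:
        return None  # arm is vertical: no horizontal pointing direction
    return math.degrees(math.atan2(dy, dx)) % 360.0


def select_sector(azimuth_deg, n_sectors=4):
    """Index of the equal-width sector of the surround that the azimuth falls in."""
    width = 360.0 / n_sectors
    # The final modulo guards against an azimuth that rounds up to exactly 360.0.
    return int(azimuth_deg // width) % n_sectors
```

A usage example, with made-up coordinates in metres:

```python
azimuth = pointing_azimuth((0.0, 0.0, 1.4), (0.5, 0.5, 1.5))
print(select_sector(azimuth))
```

A practical version would also need smoothing over time and a dwell or confirmation step, so that a passing arm movement does not trigger a selection.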

The basic kit for all projects will include at least two Panoscopes (structure, computer/video hardware and projector), along with two sets of the Traces Vision System (four video cameras, two PCs and related hardware). Estimated cost of the total kit (one instance): $10-15K, packable in a medium-sized car trunk.