Phatus

A work in progress report2010-2011

Simon Penny

Overview

Phatus is an extended interdisciplinary project to build physically instantiated, physiologically inspired voice machines.

Aesthetically, the projects aims at an Artaudian theatre of machines, an assemblage of disquieting devices which laugh, cry, moan, rage and sigh.

In terms of intellectual and historical inquiry, the project is motivated by three related observations: - The vast majority of human voice research and research into voice synthesis through the C20th has been almost exclusively preoccupied with speech.

- Prior to the C20th, voice research for the previous 200 years had focused on the making of machines which emulate physiology(Kratzenstein, von Kempelen, Darwin, Wheatstone, Faber,Paget, etc.

- As with many science and engineering research agendas, since the late 19th Century, voice research has transitioned for a physical modeling practice to and analytically mathematical practice

Around the end of the C19th, the increasing mathematization of science and engineering, along with the development of generic audio recording and playback technologies (‘general purpose’ technologies which denied their own materiality and aspired to emulate any sound)led to a preoccupation with mathematical analysis and modeling of speech. Physiological research focused on the variation of sounds produced by fine manipulation of the mouth and upper vocal tract. This bias remained present through electro-mechanical, analog electronic and digital periods.

It was therefore an amusingly perverse project to build physically instantiated machines which emulate human bodily sounds.

Project History

I conceived of this project in 2006, after reflection on (computer) voice synthesis for many years. I received initial funding for the project from the UCIRA in 2009, but tangible work could not begin till 2010.By the end of summer 2010, substantial design and materials research, was done several prototype lung/bellows machines had been built, along with general and specific microcontroller-based electromechanical process control systems (with assistance from students Eileen Chang and Anthony Tamayo).

In Fall 2010 (Sept-Dec) I was able to take up a postponed residency at Northwestern University sponsored by the Segal Institute for Human Centered Design and the Alice Kaplan Institute for the Humanities. My intention for the residency was to build microcontroller driven, motor articulated vocal tracts and larynx emulators. During the fall, working with four student assistant – Kevin Bley, Aaron Horowitz, Brain Dodge and Nate Bartlett, with the expert support of Steve Jacobson), developing prototypes of larynx and vocal track devices began in earnest.

Research at Northwestern, fall 2010

We set out with several research trajectories —

- research tractable larynx substitutes

- build a motorised pitch controllable reed ‘larynx’

- build models of the vocal tract and test the way they modify the quality of the sound produced by larynx emulator

- build a flexible, manipulable vocal tract model

- build several new bellows machines

Over the fall, the following was achieved —

- after building numerous prototypes, research in to larynx simulators settled on bagpipe style ‘singe reed’ designs.

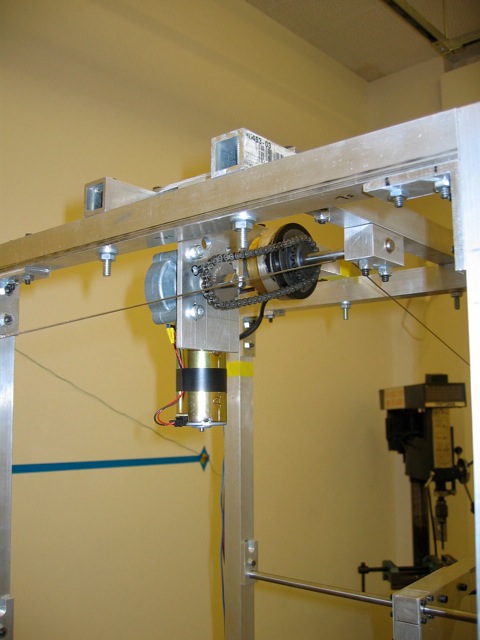

- prototypes of the motorised pitch controllable reed ‘larynx’ were built.

- idealized vocal tract vowel profiles were extracted from research papers, built as solid models and cast in various hard and flexible plastic casting media to experiment with sound quality etc.

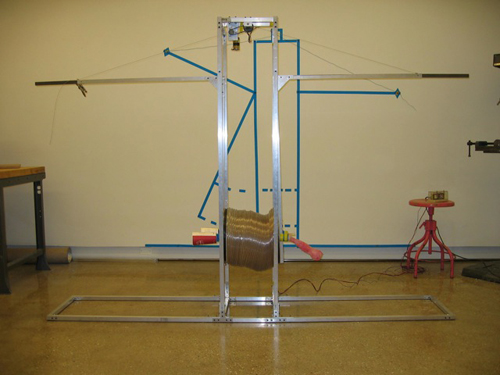

- a large new bellows machine was built, complete with reeds and vocal tract casts.

Reflections on Phatus year 1

As research progressed, several of these trajectories were abandoned for various reasons. It now appears that while fine real time control of the larynx device is important, similar control of the vocal cavity is less important – the sounds we are pursuing tend to occur with an open vocal tract, with little change of shape during the utterance. On the other hand, precise control of punctuated air delivery seems crucial. A new generation of devices will focus on more complex air delivery control.

In this process several things became clear. Most significantly, the sounds I am interested in are, evolutionarily, mammalian or primate sounds, as opposed to being especially human. Laughing and crying are convulsive. They arise largely as a result of thoracic contractions and minor larynx changes. They are bodily and muscular, and involve little fine mouth/vocal tract control. The implication is that they also are driven by different and older neurological pathways than speech, involving different brain centers.

Coming to this awareness changed the path of research somewhat – at he task of fine machine articulation of the vocal tract seems less crucial, and breath and larynx control seem more crucial. This realization has also led to a discovery regarding the history of voice research - not only has voice synthesis been largely unconcerned with affect, but there is an astonishing paucity of voice physiological research regarding affective voice. Across the whole field of phatic, non–semantic vocal sound (ie not including singing) we found (only) one paper on laughing. Considering that vocal affect contributes substantially to vocal communication between humans, a fact well understood in theatre and anthropology - this lack of research indicates the semantic preoccupations of the field, and a lack of attention to messy ‘subjective’ factors so typical of C20th science.

Status and current R+D - march 2011

The past year of R+D has provided an understanding of the problem of building pneumatico-mechanical phatic voice simulators. In the process, the design task has been imagined as in terms of iteratively finding a/the sweet-spot in a ‘design triangle’ defined by three nodes – the aesthetic goal(s), the physiological mimetic ‘target(s)’ and tractably fabricable mechanisms. At this point, the machines make silly noises vaguely reminiscent of human sounds, but some suspension of disbelief is required. In a Xeno’s paradoxical way, the production of persuasively human sounds may still be infinitely far away.